Should AI Write in Your Voice? A Decision Matrix for Coaches, Founders, and Solo Experts

The Real Trade-Off: Ship Faster vs Stay Believable

Using AI to write in an expert’s voice is not a debate about humans versus machines; it is a test of signal versus synthetic.

Use AI in your voice when the point of view is already sharp, a real review habit exists, and clear standards govern what gets published. Avoid AI-in-your-voice when positioning is still forming, drafts ship without review, or content strategy is basically “post something and hope.”

AI becomes a net gain when the point of view is already clear, the standards are already real, and someone is willing to review what ships. In that world, speed amplifies credibility because every new post reinforces the same ideas across more surfaces.

AI becomes a net loss when positioning is still fuzzy, publishing is still impulsive, and “review” is more aspiration than habit. In that world, speed does not buy growth; it buys confusion. The audience can feel the drift, even if it cannot explain it.

So the decision is straightforward. If the goal is “more output,” almost any tool will cooperate. If the goal is durable authority, the constraint is believability, and believability is a system.

The framework below turns that system into six variables. Score them honestly, then let the math make the choice.

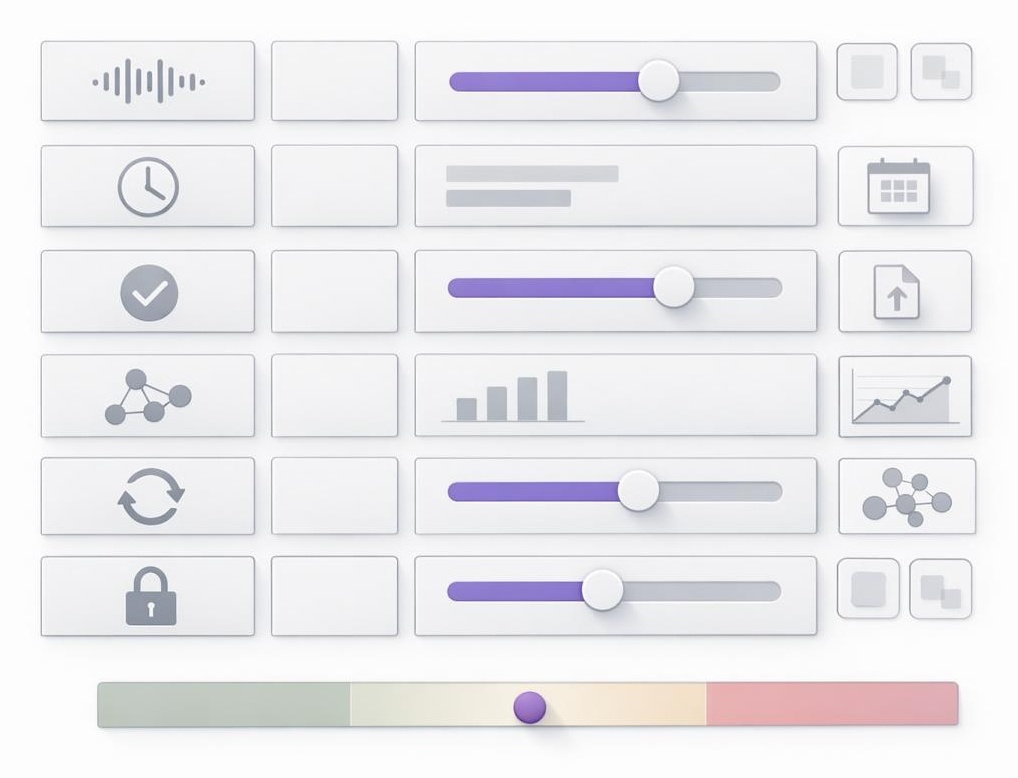

The 6-Factor Voice Decision Matrix (Score Yourself in 5 Minutes)

The safest way to think about “voice” is not tone or vocabulary. Voice is the consistent logic an audience comes to trust: what gets emphasized, what gets dismissed, what gets named plainly. When AI writes in a voice, the real question is whether the underlying logic stays intact when volume increases.

Start by scoring each factor from 1 to 5 (1 means fragile, 5 means strong). Add the scores for a total between 6 and 30.

Begin with voice fidelity risk, the cost of sounding unlike the expert. High risk shows up when the business sells judgment and trust, for example coaching, advisory work, and founder-led positioning, where one “off” paragraph can read like a stranger borrowed the account. Low risk shows up when the content is primarily educational and the brand can tolerate more neutral phrasing without breaking trust.

Next is time cost, which is not “time to write” but time lost to invisibility. A high score means content is a meaningful bottleneck: expertise exists, but publishing is inconsistent and opportunities leak out the sides. A low score means the current cadence is already sustainable, or content is not the limiting factor.

Then comes review and control, the ability to approve, edit, and enforce standards before anything goes live. A high score means a real workflow exists, drafts are inspected, and off-voice content gets rejected without drama. A low score means content goes out unreviewed, or editing happens only when there is spare time (which quietly means it does not happen). This one is the hinge, because control is what turns AI from a slot machine into a system.

After that, score cross-channel coherence, whether ideas connect across platforms or fragment into disconnected posts. A high score means there is a stable set of pillars, language stays consistent, and each piece feels like part of a larger argument. A low score means the message shape-shifts by channel, chasing whatever the platform rewards that week, like changing outfits for every room and calling it an identity.

Add feedback loop strength, how quickly publishing turns into learning. A high score means there is a cadence for noticing what resonates, updating the message, and iterating with intention. A low score means content is posted and forgotten, and the brand keeps paying tuition without collecting the lessons.

Finally, include security and IP comfort, the comfort level around what gets shared with tools and what must stay private. A high score means boundaries are clear: client identifiers, private notes, and proprietary frameworks never go into a tool verbatim. A low score means uncertainty about what is safe, which creates either risk or paralysis.

Now interpret the total:

- Green (24 to 30): AI can write in the voice with low risk, assuming review stays real.

- Yellow (17 to 23): AI can help, but only inside guardrails and a tighter workflow.

- Red (6 to 16): AI will likely magnify the wrong things; fix positioning and governance first.
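
For anyone who prefers to see the arithmetic spelled out, the scoring and thresholds above can be sketched in a few lines of Python. This is only an illustration: the factor keys and the `score_matrix` function are hypothetical names chosen for this example, and it assumes each factor has already been scored on the 1 to 5 scale described above.

```python
from typing import Dict, Tuple

# Hypothetical keys for the six factors described above.
FACTORS = (
    "voice_fidelity_risk",
    "time_cost",
    "review_and_control",
    "cross_channel_coherence",
    "feedback_loop_strength",
    "security_and_ip_comfort",
)


def score_matrix(scores: Dict[str, int]) -> Tuple[int, str]:
    """Sum six 1-5 scores and map the total to a zone."""
    if set(scores) != set(FACTORS):
        raise ValueError("scores must contain exactly the six factors")
    for name in FACTORS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")

    total = sum(scores[name] for name in FACTORS)

    # Thresholds follow the ranges listed above.
    if total >= 24:
        zone = "green"
    elif total >= 17:
        zone = "yellow"
    else:
        zone = "red"
    return total, zone


# Made-up example scores, for illustration only.
example = {
    "voice_fidelity_risk": 4,
    "time_cost": 5,
    "review_and_control": 3,
    "cross_channel_coherence": 4,
    "feedback_loop_strength": 3,
    "security_and_ip_comfort": 4,
}
print(score_matrix(example))  # (23, 'yellow')
```

In this made-up example, a single extra point on review and control would move the total from yellow to green, which is one way to see why that factor deserves the closest look.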

A non-obvious truth sits underneath the scoring. Model quality is rarely the deciding factor. Review and control is. The best system still fails if nobody is accountable for what ships.

Green Zone: When AI in Your Voice Compounds Authority

A green score usually means something important is already in place, not a tool, but a backbone. The audience already knows what the brand stands for, and the content has a recognizable spine, not just a recognizable style.

The clearest sign is crisp positioning. The audience can summarize what the expert believes in a sentence, and that sentence does not change depending on the day or the platform. That clarity gives AI something to amplify instead of forcing it to improvise.

The next sign is repeatable ideas. There are concepts that show up again and again because they are true in the business, not because they are trendy in the feed. When content is built from those pillars, volume starts to behave like compounding interest: each new piece reinforces the last.

Green-zone operators also have a real editorial reflex. Off-voice drafts get cut. Vague drafts get sharpened. Safe-but-generic drafts get rewritten. That willingness to say “no” is what keeps AI from turning into an agreeable ghostwriter that flatters everyone and persuades no one.

The practical move is to start with a small surface area, one channel and one format, then lock a review cadence that cannot be skipped. Consistency beats intensity, but only when consistency stays coherent; otherwise it is just consistent noise.

Red Zone: When AI Will Quietly Destroy Trust

A red score does not mean AI is “bad.” It means AI will accelerate ambiguity, and ambiguity is the silent killer of authority. The internet is already full of content that sounds fine, reads smoothly, and leaves nothing behind.

The first failure mode is fuzzy point of view. When the business cannot clearly state what it stands for, AI fills the gap with culturally available language, the phrases everyone has already seen. The result is content that sounds competent but feels interchangeable, which is the opposite of authority.

The second failure mode is governance-free publishing. If drafts go out without review, brand drift is not a possibility; it is a guarantee. Voice becomes a moving target, and the audience pays the price in extra cognitive load. People do not follow what they must constantly re-interpret.

The third failure mode is cross-channel chaos. When each platform gets a different version of the message, the brand stops feeling like a thinker and starts feeling like a performer. It becomes content fast fashion: lots of outfits, no wardrobe. The hidden cost is a credibility tax: fewer referrals, weaker conversion, and a long climb back to trust.

The fix is not “use AI more carefully.” The fix is to clarify positioning, define standards, and build a review habit before scaling output. AI should multiply signal, not manufacture it.

The Control Model: Keep the Voice, Scale the Volume (How Inkflare Fits)

Authority is engineered through consistent signals, not sporadic inspiration.

That is why the strongest model is human-directed leverage. The expert owns the point of view and the final judgment. Inkflare operationalizes the system around it, so visibility stops depending on perfect energy and spare time.

In practice, the workflow is simple. Inputs capture real thinking (notes, long-form ideas, lessons learned). Draft generation turns those inputs into publishable assets. Human review enforces voice and standards. Publishing makes the message show up consistently. Measurement creates a feedback loop, so the content gets sharper over time rather than merely louder.

Cross-channel coherence is the multiplier most people miss. One idea should not become five disconnected posts. It should become an interlinked ecosystem where each piece strengthens the others, building durable discoverability across social feeds, search engines, and AI-driven discovery surfaces.

Security and IP comfort belongs inside the system too. Client details, private examples, and proprietary frameworks should stay out of prompts and drafts. Public content can teach principles, distinctions, and decision logic without leaking the crown jewels.

The decisive insight is this: AI writing is not a shortcut to authenticity; it is a test of governance. When control is real, speed can compound trust. When control is absent, speed only compounds noise. Which system is being built?