Should Leaders Rewrite Their Purpose Statement for AI Agents?

Purpose in the AI-agent era: keep the slogan, or rebuild the operating system?

Leaders should rewrite a purpose statement only when it fails at its real job: governing decisions when tradeoffs get tense. If purpose already reduces conflict, speeds decisions, and guides what gets measured, keep it. If it reads well but does not steer behavior under pressure, refine it. If it is generic enough to fit any company and therefore useful to none, rebuild it.

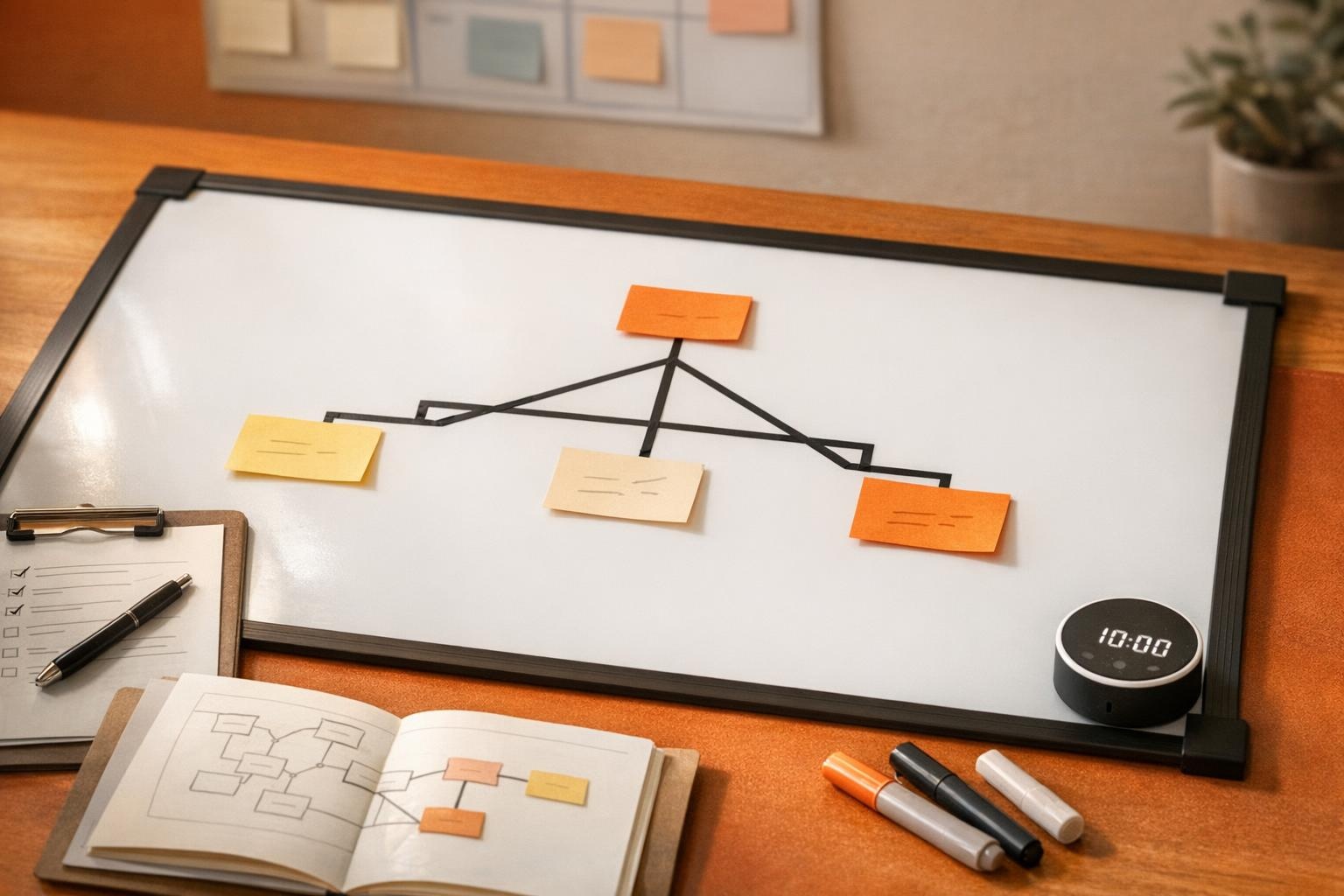

This is not about pleasant words written on a piece of paper. Purpose is closer to governance than poetry. A purpose statement is an operational artifact that should reduce decision latency, resolve cross-functional conflict, and steer metric selection. In a healthy organization, it functions like a load-bearing beam, holding shape when the room gets crowded and priorities collide.

The tell is rarely a lack of ambition. The tell is operational: cross-functional conflict that never resolves, decision latency that turns simple calls into week-long threads, metrics that reward activity instead of outcomes, and managers who spend their attention budget arbitrating exceptions. When those four signals spike, the purpose statement is no longer a harmless poster. It is an outdated operating system.

For teams that want a structured reset process, the 14-day approach in “The Purpose Discovery Sprint That Turns Meaning Into Execution in 14 Days” works well as a companion tool. The decision still comes first: keep, refine, or rebuild.

When OKRs and metrics are enough (and when they are the trap)

OKRs and metrics are excellent coordination tools, but they are unreliable judges in value conflicts. Metrics can tell a team what is moving. They struggle to tell a team what matters when two “good” numbers point in opposite directions.

A familiar scene: a dashboard looks healthy, the calendar is full, and yet something feels off. Handoffs get sloppy. Customer experience gets traded for speed. People are “aligned” in meetings and misaligned in execution. This is how the activity trap forms. When AI increases throughput, that trap gets deeper because output becomes cheap. The risk shifts from “not enough work” to “too much work that does not count.”

Doubling down on OKRs makes sense when the environment is stable enough that measurement can carry governance. That is usually true when definitions do not change weekly, conflict is low, and leaders have enough managerial capacity to resolve edge cases quickly. In those conditions, metrics act like a well-tuned instrument panel. Someone still has to drive, but the gauges help.

Metrics become a proxy religion when they expand faster than clarity. Signs include metric sprawl, local optimization disguised as progress, teams gaming targets, and dashboard inflation where every number is “up and to the right” while decision quality quietly decays. Purpose is the antidote, not because it replaces metrics, but because it tells metrics what to optimize for, and what not to sacrifice.

A simple rule holds: metrics manage performance, purpose governs priorities and tradeoffs.

The Decision Tree: keep, refine, or rebuild your purpose statement

A purpose statement earns its keep when it makes tradeoffs easy to explain and hard to evade. This decision tree routes leaders to the lightest intervention that restores purpose as a decision governor, using four observable signals.

Start with cross-functional conflict. If teams repeatedly escalate the same disagreements, prioritization fights keep reappearing under new names, or delivery slows because nobody wants to “lose,” that is rarely a process problem. It is a governance vacuum. Move directly into a purpose clarity check: can the current statement settle a real tradeoff, or does it politely nod at every option?

If conflict is low or resolves cleanly, move to decision latency. Watch for approvals that loop, decisions that require three meetings to say “yes” to what everyone already agreed to in writing, or “quick questions” that become week-long threads. When latency is high, the issue is often missing rules for irreversible decisions. That calls for a governance check, not a faster standup.

If decisions move quickly, examine activity-trap risk. The warning sign is not low output; it is high output with weak outcomes. Leading indicators are fuzzy, teams optimize what is easy to count, and AI makes it effortless to ship more of the wrong thing. When that pattern shows up, purpose usually needs refinement so metrics stop rewarding motion over progress.

Finally, assess manager capacity. When managers are overloaded, exceptions pile up, and every edge case requires a human referee, the system is signaling that it lacks clear defaults. In that situation, a tighter purpose can reduce exception-handling. If the purpose is too broad to create defaults, a rebuild is often the fastest path back to calm execution.

Three outcomes follow.

Keep when purpose already functions like a structural beam. Tradeoffs resolve without theater, teams use the same language for success, and metrics ladder into shared intent. Next step: a 60-minute language audit to remove jargon and sharpen the “so what.” Timebox: one week.

Refine when the core intent is right, but the statement lacks usable boundaries. Next step: a two-week sprint to add explicit tradeoff rules and definitions, then lock them into a single decision memo template. Timebox: two weeks.

Rebuild when purpose is generic, contradictory, or disconnected from how work gets prioritized. Next step: a 30-day rebuild tied to real decisions, not a wordsmithing marathon. Timebox: 30 days.
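For teams that want to make this routing explicit, for example in a quarterly review template, the tree can be sketched as a small function. This is a minimal Python sketch under stated assumptions, not a prescribed tool: the field names are hypothetical, each signal is reduced to a yes/no judgment, and the `purpose_generic` flag encodes an assumed reading of the text, namely that a generic statement forces a rebuild only once at least one warning signal fires.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    KEEP = "keep"
    REFINE = "refine"
    REBUILD = "rebuild"


# Timeboxes taken from the three outcomes described in the text.
TIMEBOX_DAYS = {Outcome.KEEP: 7, Outcome.REFINE: 14, Outcome.REBUILD: 30}


@dataclass
class Signals:
    """True means the warning sign is present (hypothetical field names)."""
    conflict_high: bool        # the same disagreements keep escalating
    latency_high: bool         # approvals loop, quick questions become threads
    activity_trap: bool        # high output, weak outcomes
    managers_overloaded: bool  # every edge case needs a human referee
    purpose_generic: bool      # assumption: statement too broad to create defaults


def route(s: Signals) -> tuple[Outcome, str]:
    """Check signals in the tree's order; stop at the first one that fires."""
    checks = [
        (s.conflict_high, "conflict: run a purpose clarity check"),
        (s.latency_high, "latency: add rules for irreversible decisions"),
        (s.activity_trap, "activity trap: stop metrics rewarding motion"),
        (s.managers_overloaded, "capacity: tighten purpose into clear defaults"),
    ]
    for fired, reason in checks:
        if fired:
            # A generic statement cannot be refined into defaults, so rebuild it.
            outcome = Outcome.REBUILD if s.purpose_generic else Outcome.REFINE
            return outcome, reason
    # No warning signs: purpose is already governing, so keep it.
    return Outcome.KEEP, "no signals: run a 60-minute language audit"


outcome, reason = route(Signals(False, True, False, False, False))
print(outcome.value, TIMEBOX_DAYS[outcome], reason)
# prints: refine 14 latency: add rules for irreversible decisions
```

The early return mirrors the tree's "lightest intervention" logic: the first signal that fires names the problem, and the state of the statement itself decides whether refinement is enough.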

The test is practical: if purpose is paint, it looks good and changes nothing. If purpose is structure, it changes what gets built.

The Silo Stress Test for AI-era handoffs

Purpose must survive handoffs to be real, and AI increases the number and speed of those handoffs. A team can sound aligned inside a function and still fracture the moment work crosses into another function, another tool, or an AI agent operating on a narrow objective.

Run a silo stress test on one workflow that now includes AI, such as demand generation to sales, support to product, or data to marketing. Pick a single initiative that matters enough to reveal tradeoffs, but small enough to map in one meeting. Then ask each silo, separately and without syncing, to answer three prompts.

First: What is success? Second: What gets sacrificed first when time gets tight? Third: What is the escalation rule when metrics conflict? The answers do not need to be long. They need to be honest, and they need to be written as if nobody gets to hide behind “it depends.”

Compare the responses side by side. If the answers converge on the same tradeoff logic, purpose is governing. If the answers diverge, purpose is decorative. The divergence can be subtle. One team defines success as speed, another defines it as quality, a third defines it as margin, and the AI agent optimizes the metric it can see, not the intent it cannot.

This is where AI-era failure modes show up without drama. Agents optimize local objectives because that is what they are designed to do. Humans defer to dashboards because dashboards feel certain. Exceptions multiply because the system lacks a shared rule for “what matters more.” Handoffs lose intent, and rework becomes the tax paid for ambiguity.

A clear pass criterion is simple: separate teams, asked separately, describe the same sacrifice order and the same escalation trigger. A clear fail criterion is equally simple: success changes by team or tool. When the test fails, the fix is not more meetings. The fix is a purpose statement that behaves like guardrails, so handoffs keep direction even when speed increases.
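The comparison step can be sketched in a few lines. This Python sketch assumes a facilitator has already condensed each silo's written answers into short shared labels; comparing raw free text this way would flag harmless wording differences. The `SiloAnswer` type, the team names, and the sample answers are all hypothetical. Consistent with the pass and fail criteria above, the test passes only when every silo, including any AI agent, gives the same success definition, sacrifice order, and escalation trigger.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiloAnswer:
    team: str                         # a function, tool, or AI agent
    success: str                      # "What is success?"
    sacrifice_order: tuple[str, ...]  # what gets sacrificed first, then next
    escalation_trigger: str           # the rule when metrics conflict


def stress_test(answers: list[SiloAnswer]) -> tuple[bool, list[str]]:
    """Pass only if every silo gives the same answer to all three prompts."""
    findings = []
    for prompt in ("success", "sacrifice_order", "escalation_trigger"):
        distinct = {getattr(a, prompt) for a in answers}
        if len(distinct) > 1:
            detail = ", ".join(f"{a.team}={getattr(a, prompt)!r}" for a in answers)
            findings.append(f"{prompt} diverges: {detail}")
    return not findings, findings


# Hypothetical answers from a demand generation to sales workflow.
answers = [
    SiloAnswer("demand_gen", "pipeline volume", ("quality",), "cost per lead"),
    SiloAnswer("sales", "closed revenue", ("quality",), "cost per lead"),
    SiloAnswer("scoring_agent", "pipeline volume", ("quality",), "cost per lead"),
]
passed, findings = stress_test(answers)
print(passed)  # False
for line in findings:
    print(line)
```

Listing the divergence per team, rather than returning a bare pass/fail, keeps the output useful as an agenda: it shows exactly where success changes by team or tool.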

If you refine or rebuild: a 30-day operating plan that makes purpose executable

Purpose becomes usable when it shows up as defaults in the system, not as inspiration on the wall. A 30-day plan works because it forces purpose to attach to decisions, workflows, and measurement, which is where good intentions either compound or evaporate.

Week 1 is diagnosis with receipts. Inventory where conflict repeats, where decisions stall, and where rework clusters. Capture the recurring tradeoffs people argue about in private but avoid naming in public. Then list the top five irreversible decisions that keep appearing; those are where governance is needed most. If the same choice keeps getting re-litigated, that is not nuance; it is missing structure.

Week 2 is drafting purpose as rules. Write what the organization optimizes for, what it will not optimize for, what tradeoffs are acceptable, and what lines cannot be crossed. The goal is not moral purity; it is decision speed without regret. A useful purpose does not eliminate hard calls; it makes the hard call repeatable.

Week 3 is translation into system hooks. Install one decision memo template that asks which tradeoff rule is being applied. Define an escalation path that reduces thrash. Prune metrics that reward motion over progress, and favor lead measures over lag trophies. If AI can generate ten options in ten seconds, the bottleneck becomes selection, not production.

Week 4 is rollout through two pilots, one human-heavy workflow and one AI-heavy workflow. Measure cycle time, rework, and exception volume. If purpose is working, those numbers improve without a heroic push because the default got better. If those numbers worsen, that is valuable too: it means the new “beam” is not aligned with where the weight actually sits.
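Comparing each pilot against its own pre-pilot baseline keeps the Week 4 read honest. The Python sketch below assumes lower is better for all three measures and that the team already tracks them; the field definitions, the sample numbers, and the “mixed” and “unchanged” buckets are hypothetical additions, not part of the plan itself.

```python
from dataclasses import dataclass


@dataclass
class PilotMetrics:
    cycle_time_days: float  # assumed definition: median request-to-done time
    rework_rate: float      # assumed definition: share of items redone
    exceptions: int         # escalations needing a human referee


def verdict(baseline: PilotMetrics, pilot: PilotMetrics) -> str:
    """Compare a pilot with its pre-pilot baseline; lower is better for all three."""
    deltas = [
        pilot.cycle_time_days - baseline.cycle_time_days,
        pilot.rework_rate - baseline.rework_rate,
        pilot.exceptions - baseline.exceptions,
    ]
    if all(d == 0 for d in deltas):
        return "unchanged"
    if all(d <= 0 for d in deltas):
        return "improved"   # the better default is doing the work
    if all(d >= 0 for d in deltas):
        return "worsened"   # the new beam is not where the weight sits
    return "mixed"          # inspect which tradeoff rule moved the load


baseline = PilotMetrics(cycle_time_days=9.0, rework_rate=0.20, exceptions=40)
print(verdict(baseline, PilotMetrics(7.5, 0.15, 28)))  # improved
print(verdict(baseline, PilotMetrics(6.0, 0.30, 40)))  # mixed
```

Run it once for the human-heavy pilot and once for the AI-heavy pilot; a “mixed” result is often the most informative, because it shows which tradeoff the new rules quietly shifted.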

The quiet takeaway is freeing: treat purpose like governance infrastructure, then let OKRs do what they do best. Pick one workflow, run the silo stress test, and see whether the organization is running on shared intent or shared busyness.